Improving AI performance

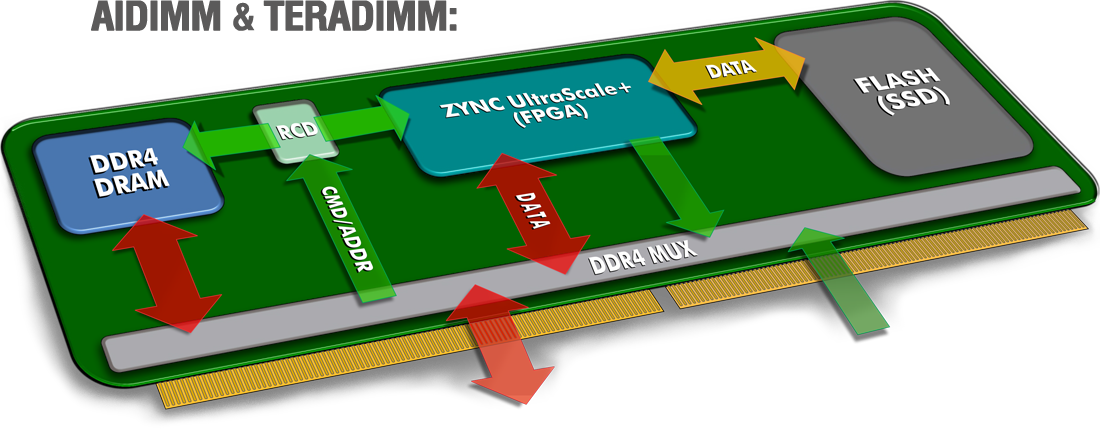

- Developed two new products: AIDIMM & TERADIMM

- Invented an interconnect architecture with significantly lower latency than the PCIe interface

- Moved AI processing (AIDIMM) and SSD storage (TERADIMM) to the DRAM interface

AIDIMM vs. Leading GPUs

ResNet-50

Our idea is to place a GPU on a DIMM. We call this new device an AIDIMM because we envision it being used for AI. The AIDIMM can then be installed on a standard DRAM interface.

Instead of using traditional GPU semiconductor components, we have chosen to use an FPGA (Field-Programmable Gate Array).

The FPGA can implement AI/DL algorithms just like a GPU, and it has the added flexibility and programmability to implement our new interconnect architecture.

Over 2x Latency Improvement

Our Story

AI Plus started with the bold idea of improving AI performance.

Today, GPUs are used for AI/DL processing. We discovered that the main bottleneck for GPUs is the PCIe interconnect technology.

Combining our background, experience and expertise in memory technology, we developed a new interconnect architecture that allows us to move the GPU to the DRAM interface. This allows for significantly lower latency than the PCIe interface.

We also discovered that SSD storage could be moved to the DRAM interface resulting in better data storage and access with lower latency.

Building on FPGAs allows us to implement our new interconnect architecture with existing, off-the-shelf semiconductors, keeping the cost down and providing faster time to market.

With this in mind, we developed two groundbreaking AI solutions:

- AIDIMM

- TERADIMM

Use Cases

The AI Plus architecture can empower the following industries:

AI Security

One of the biggest challenges that major national retailers such as Walmart have to deal with is ‘shrinkage’ due to theft.

Every year around 1.3% of retail revenues in the US is reportedly lost due to this common form of fraud, representing a total loss of $47 billion according to the National Retail Federation.

Fraud Detection

Global transaction losses in the United States due to credit card fraud topped $25 billion in 2019.

Financial service companies such as VISA are using AI and Machine Learning to detect and flag transactions in real time that do not match a cardholder’s profile and spending patterns.

Development System

- 24 DIMM Slots

- Dual Processor

- Intel Xeon Scalable

- Data transfer latency

- Data transfer throughput

- ResNet-50 latency